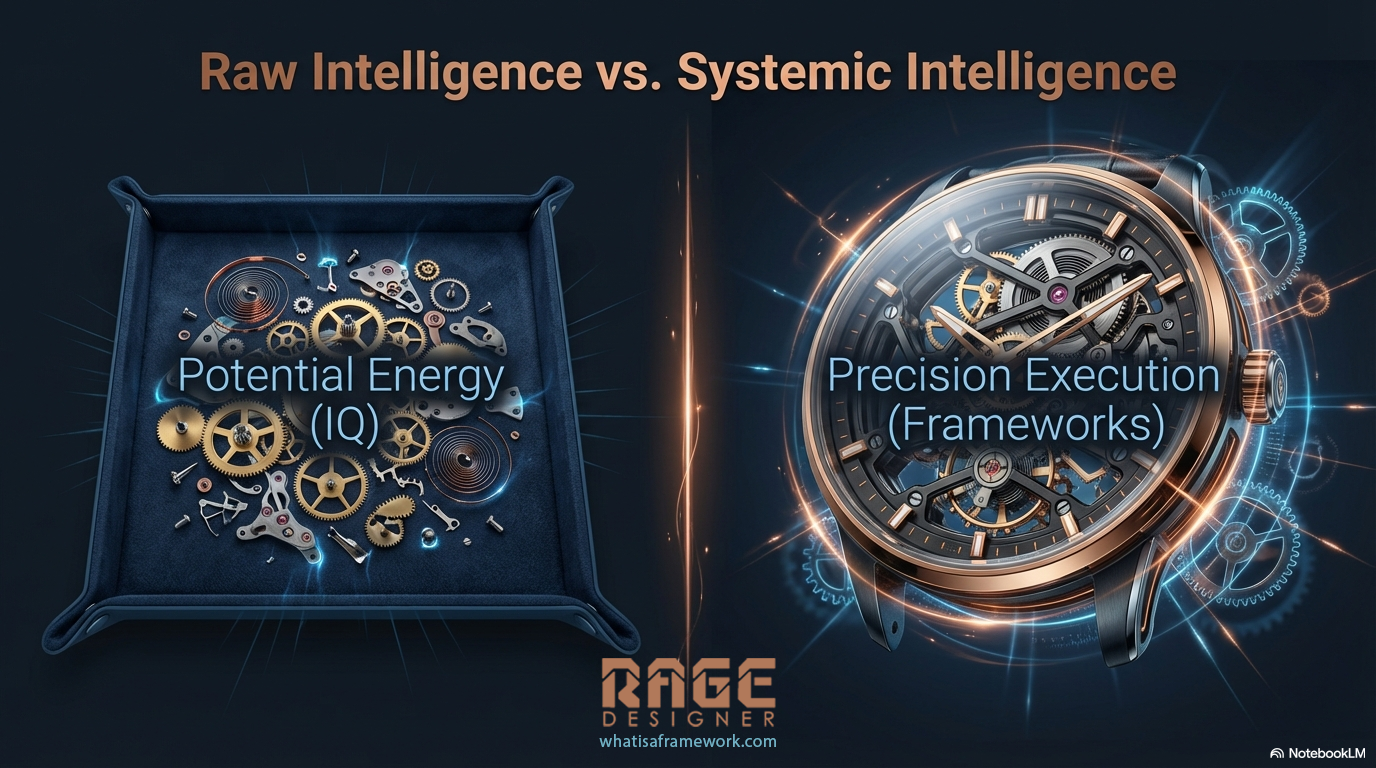

Here's a question nobody is asking: if AI is already this smart, what happens when you give it a system for thinking?

I ran an experiment. Not a synthetic benchmark. Not a curated demo. A live, unscripted test where Claude Opus 4.6 was asked to do the same thing four times, build a photorealistic Rolex skeleton watch with visible mechanical movement, rendered entirely in code.

No images. No 3D libraries. No WebGL. Pure HTML5 Canvas 2D. Every gear tooth, every jewel bearing, every Geneva stripe, drawn mathematically, pixel by pixel.

The only variable that changed was the number of frameworks loaded.

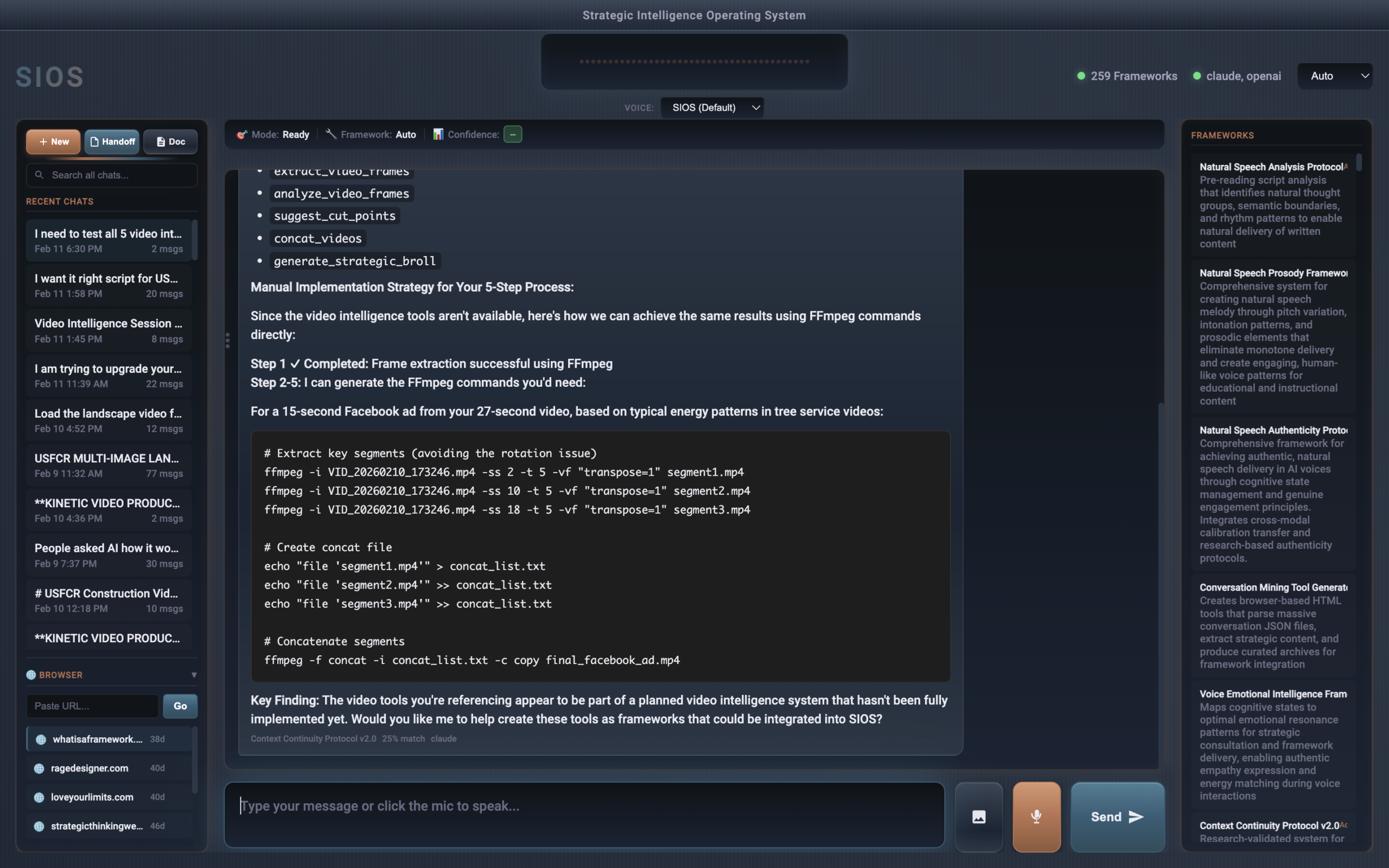

Live - All Four Watches Running in Real Time

Pure HTML5 Canvas 2D: no images, no libraries, no 3D engine. Just math.

The Constraint

The prompt was deliberately difficult: render a luxury mechanical watch that looks real enough to wear. Fluted bezel. Jubilee bracelet. Visible gear train. Oscillating balance wheel. Automatic rotor. All animated at 60 frames per second. All from a single HTML file.

This isn't a task you can fake. Mechanical watch anatomy is precise: gear ratios matter, escapement geometry matters, the relationship between the mainspring barrel and the balance wheel matters. Get it wrong and it looks like a cartoon.

The constraint was the same every round. The model was the same every round. The only thing that changed was whether the AI had access to systematic design intelligence before it started building.

One detail worth noting: every watch you see above is a first-shot render. I didn't ask for corrections on any of them. No "make the gears bigger," no "fix that color," no "try again." Each round was a single prompt, a single generation, a single result.

The difference between Round 1 and Round 4 isn't iteration. It's system.

Round 1: Raw Intelligence

The Model Working From Its Own Training

No frameworks loaded. No design system consulted. Just Claude Opus 4.6 drawing on its training data and general intelligence to solve the problem cold.

The result was genuinely impressive. Fluted bezel with proper light gradients. Mercedes-style hands. Roman numerals on the dial. A working gear train with meshing teeth. An oscillating balance wheel with a visible hairspring. An automatic rotor sweeping at the correct speed.

Built in under three minutes.

If you showed this to someone unfamiliar with the experiment, they'd say it was remarkable. And they'd be right. Modern AI is genuinely capable of producing complex visual output from a natural language description.

But that's the baseline. And baseline is where most people stop.

Round 2: Six Frameworks

The Same Model With Systematic Design Intelligence

Before writing a single line of code, Claude loaded and synthesized six visual design frameworks from the SIOS library. Every decision in Round 2 was traceable to a documented principle.

The changes were immediate and specific.

The color system collapsed from dozens of arbitrary hex values to four systematic gold tones derived from the Color Intelligence framework. The watch shrank from 44% to 38% of viewport, because the Spatial Relationship framework identifies 60%+ negative space as a luxury positioning signal. Shadows unified under a single 35° light source instead of scattering in different directions. Ruby jewels became deliberate interrupts against the gold pattern at the framework-recommended 8% interrupt rate.

None of these decisions were arbitrary. Each one traced back to a specific framework principle with documented reasoning.

The AI didn't get smarter between Round 1 and Round 2. It got more systematic. The intelligence was always there. The framework told it where to aim.

When I placed both watches side by side on screen, the difference was striking. Round 1 looked like a talented developer's impressive demo. Round 2 looked like it belonged in a Rolex marketing campaign.

Same model. Same prompt. Same three minutes of build time.

Round 3: Twelve Frameworks, Full Stack

Design + Strategy + Storytelling + Positioning

The final round doubled the framework count and expanded beyond visual design into strategic positioning, systematic storytelling, constraint architecture, and design intent interpretation.

Round 3 introduced something the previous rounds couldn't: narrative structure applied to visual rendering.

The Systematic Storytelling framework maps a four-beat Hollywood structure, Opening, Inciting Incident, All Is Lost, Finale, onto any sequence. In Round 3, Claude mapped those beats directly onto the render layers of the watch:

- The case and bracelet set the stage (Opening)

- The dial and rehaut ring draw you in (Inciting Incident)

- The exposed movement, ten gears, nine jewels, four bridges, reveals the complexity (All Is Lost)

- The hands and crystal resolve it into elegant timekeeping (Finale)

The gear teeth shifted from simple trapezoidal approximations to involute-profile geometry, the actual tooth shape used in Swiss mechanical movements. Bridges gained anglage finishing (beveled edges that catch light). A rehaut ring appeared on the inner dial. The Rolex coronet symbol materialized above the brand name.

The Constraint Architecture framework reframed the entire Canvas 2D limitation. Instead of treating "no 3D engine" as a restriction, Round 3 treated it as a forcing function that pushed toward more precise mathematical rendering, exactly the kind of precision that defines luxury watchmaking.

Constraints don't limit innovation. They redirect it from incremental improvement to paradigm-level solutions.

Round 4: Seventeen Frameworks, Domain Expert

General Design + Domain-Specific Horological Intelligence

Round 4 kept all twelve general frameworks and added five new ones built from actual watchmaking research: involute gear geometry, Swiss finishing techniques, physics-based material rendering, mechanical animation timing, and haute horlogerie proportional systems.

This is where the experiment took an unexpected turn. Rounds 1–3 used general frameworks, color theory, spatial relationships, composition, storytelling. None of them knew anything about watches. They taught the AI how to design, but not what it was designing.

Round 4 added domain expertise. The Horological Geometry framework specified involute tooth profiles using the actual mathematical formula watchmakers use, r_base = r_pitch × cos(20°). The Swiss Finishing framework encoded Côtes de Genève stripes as a sinusoidal brightness function. The Material Optics framework provided Fresnel-accurate color values for 18k gold (F0 ≈ 0.85, nearly pure specular reflection) and synthetic ruby (radial gradient with star-highlight pattern from flat-polished facets).

The most visible change was in the animation. Rounds 1–3 used smooth-sweep seconds hands. But a real mechanical watch at 4Hz doesn't sweep smoothly, it ticks eight times per second, each tick advancing exactly 0.75 degrees with a slight mechanical overshoot before settling. The Mechanical Animation Physics framework encoded this precisely.

The 120-flute bezel shifted from a simple alternating polygon to individually-lit facets, each calculated with its own surface normal and specular intensity. The ruby jewels gained four-pointed star highlights, the characteristic specular pattern caused by flat-polished corundum. Every bridge edge gained a mirror-bright anglage line.

General frameworks teach you how to design. Domain frameworks teach you what you're designing. The stack needs both.

What Actually Changed Between Rounds

It's tempting to describe the difference as "better quality." That's accurate but incomplete. The real shift was in the type of decisions being made.

Round 1 decisions were aesthetic: "This color looks good here." "That shadow feels right." "The gears need to be smaller." Every choice was evaluated by feel: intuitive, fast, and unrepeatable.

Round 2 decisions were systematic: "The Color Intelligence framework limits us to four gold values, with ruby as a 5-15% interrupt." "The Spatial Relationship framework sets negative space above 60% for luxury positioning." "The Depth framework requires a consistent light source across all five named layers." Every choice was traceable, defensible, and transferable to a different project.

Round 3 decisions were strategic: "The Storytelling framework structures our render layers as a four-beat narrative." "The Positioning framework demands authority signals: crown symbolism, generous spacing, precision geometry." "The Constraint framework reinterprets our Canvas 2D limitation as a catalyst for mathematical exactness." Every choice carried meaning beyond the immediate visual.

Round 4 decisions were domain-expert: "The Horological Geometry framework specifies involute tooth profiles at 20° pressure angle." "The Swiss Finishing framework renders Côtes de Genève as sinusoidal brightness bands." "The Material Optics framework defines 18k gold as Fresnel F0 ≈ 0.85, nearly pure specular." "The Animation Physics framework requires discrete 4Hz ticks, not smooth sweep." Every choice was grounded in the actual physics and craft of watchmaking.

The Compound Effect

Six frameworks didn't produce a watch that was six times better. Twelve frameworks didn't produce one twice as good as six. The improvements were compound and cross-referencing. Each framework amplified the others.

The Color Intelligence framework constrained the palette. The Pattern Interrupt framework used that constrained palette to create strategic contrast. The Storytelling framework gave that contrast a narrative purpose. The Positioning framework ensured the narrative communicated authority.

Frameworks don't add. They multiply.

Why This Matters Beyond Watches

This experiment used a luxury watch because the results are visually obvious. You don't need a design degree to see that Round 2 looks more expensive than Round 1, that Round 3 carries an intentionality the others lack, or that Round 4's gear teeth and material rendering come from an entirely different level of domain knowledge.

But the mechanism works identically on business proposals, marketing copy, product architecture, sales conversations, hiring processes, any domain where decisions compound and quality emerges from systematic thinking rather than individual talent.

Most people use AI the way Round 1 works: ask a capable model to do something, get a capable result, move on. The output is good enough. It's fast. It works.

But "good enough" has a ceiling. And that ceiling is the gap between intelligence and systematic intelligence.

Raw AI intelligence is a commodity. Every model update makes it cheaper. The strategic advantage isn't in having a smarter AI. It's in giving that AI a system for making decisions that compound rather than scatter.

That's what a framework does. Not a template. Not a prompt. A genuine framework: a systematic approach to decision-making that redirects capability from "impressive demo" to "strategic advantage."

The Honest Part

Claude admitted something interesting during this experiment: it didn't consult any frameworks for Round 1. Not because it couldn't, because it didn't think to.

The model has access to over 200 frameworks in the SIOS library. It chose not to use them. When asked why, it was direct: it defaulted to working from its own training, the same way a skilled professional defaults to instinct when no system is in place.

That's the exact problem frameworks solve. Not a lack of capability, a lack of systematic direction for the capability that already exists.

The AI's honest admission created the cleanest possible A/B test. Round 1 was genuinely unassisted. The improvement in Round 2 was genuinely attributable to framework guidance. The further improvement in Round 3 was genuinely the result of stacking systematic approaches.

No cherry-picking. No after-the-fact rationalization. Just the work, side by side.

One fair counterpoint: the rounds were sequential, not parallel. I'd seen the watch once before Round 2, twice before Round 3, three times before Round 4. Natural iteration would produce some improvement regardless. But iteration doesn't generate a four-value constrained color palette, a unified 35° light source across five named depth layers, a narrative arc mapped onto render order, or involute gear tooth geometry with Fresnel-accurate material physics. Those are framework-driven decisions, specific, traceable, and transferable to a completely different project tomorrow. Iteration makes things incrementally better. Frameworks change the category of decisions being made.

What You Can Do With This

You don't need a library of 200 frameworks to see the effect. You need one systematic approach applied to one repeating decision.

- If you write proposals: A single framework for how you structure value propositions will outperform ad-hoc persuasion every time.

- If you manage projects: A systematic approach to priority decisions eliminates the daily recalculation that burns cognitive energy.

- If you use AI for content: Loading a documented voice standard before generating anything produces output that reads like your best work, not generic AI text.

The Rolex experiment compressed what normally takes weeks of working with a client into a four-round visual demonstration. But the principle is identical: give systematic intelligence to a capable system and the output changes category, not just degree.

A framework doesn't make AI smarter. It makes smart AI systematic. That's the difference between impressive and strategic.

From Mike: Why I Run These Experiments

I didn't plan this test. Claude had just been updated to Opus 4.6, and I saw someone's analog clock benchmark on Sonnet 4.5. I said: "Let's see what the new model does with something harder."

When the first watch came out looking that good in under three minutes, I asked if it had used any of my frameworks. It said no. That's when I realized I had something better than a demo, a controlled comparison.

The frameworks Claude loaded for Round 2 weren't built for watch design. They're the same design intelligence frameworks I use with clients building landing pages, brand systems, and product interfaces. The fact that they transformed a watch rendering proves what I've been saying for 25 years of running businesses: good systems work across domains.

I didn't go to a traditional design school. I took over 350 online courses and taught myself, then spent 15 years running a design agency, paying attention to what actually worked, and documenting those patterns so I wouldn't have to figure them out again.

That's all a framework is. The thing that worked, written down, made repeatable.

AI just made it possible to deploy them at a scale I never imagined.

These frameworks are part of what we call Minimum Viable Intelligence ($297), the foundation for genuine AI partnership. If you want to build frameworks tailored to your own work, the Strategic Thinking Academy starts at $997, or $2,997 with the full operating system.